Ask a good server engineer where their server configuration is defined and the answer will likely be something similar to:

"In my Puppet manifests."

Ask a network administrator the same thing about the network devices and they'll probably look at you in confusion. Likely responses may include:

"Uh, the device configuration is on the device, of course."

"We take config backups every day!"

Why is it that the server team seems to have gotten their act together while the network team is still working the same way they were twenty years ago?

The Device As The Master Configuration Source

To clarify the issue: for many companies, the instantiation of the company's network policy is the configuration currently active on the network devices. To understand a full security policy, it's necessary to look at the config on a firewall. To review load balancer VIP configurations, one would log into the load balancer and view the VIPs. There's nothing wrong with that, as such, except that by viewing the configuration on a running device, we see what the configuration is, not what it was intended to be.

Think about that for a moment: taking daily backups of a device configuration tells us absolutely nothing about what we had intended the policy to be; rather, it's just a series of snapshots of the currently implemented configuration. Unless an additional step is taken to compare each config snapshot against some record of the intended policy, errors (and malicious changes) will simply be perpetuated as the new "latest" configuration for a device.
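That "additional step" can be as simple as a diff. The following is a minimal sketch, assuming the intended policy is stored as plain text in the same format as the backup; the function name and the sample `set` lines are made up for illustration and do not come from any particular tool.

```python
import difflib


def config_drift(intended: str, running: str) -> list[str]:
    """Return unified-diff lines showing where the running config departs from the intended one."""
    diff = difflib.unified_diff(
        intended.splitlines(),
        running.splitlines(),
        fromfile="intended",
        tofile="running",
        lineterm="",
    )
    return list(diff)


# Hypothetical sample data: the intended policy versus last night's backup.
intended = "set vlans blue vlan-id 100\nset vlans green vlan-id 200"
running = "set vlans blue vlan-id 100\nset vlans green vlan-id 201"

for line in config_drift(intended, running):
    print(line)
```

An empty result means the snapshot still matches the recorded intent; anything else is a change that somebody should be able to explain.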

Contrast this to a Linux server managed by, for example, Puppet. The server team can define a policy saying that the server should run Perl v5.10.1, and code that into a Puppet manifest. A user with appropriate permissions may decide that for some code they are writing, they need to have Perl v5.16.1, so they install the new version, overwriting the old one. In the network world, a daily backup of the server config would now include Perl 5.16.1 and from then on that would implicitly be the version of Perl running on that device, even though that wasn't the owning team's intent.

Puppet, on the other hand, runs periodically and checks the policy (as represented by the manifest) against what's running on the device itself. When the Perl version is checked, the discrepancy will be identified, and Puppet will automatically restore v5.10.1 because that's the version specified in the policy. If the server itself dies, all a replacement server really needs is to load the OS with a basic configuration and a Puppet agent, and all the policies defined in the manifest can be instantiated on the new server just as they were on the old server. The main takeaways are that the running configuration is just an instantiation of the policy, and the running configuration is checked regularly to ensure that it is still an accurate representation of that policy.
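The check-and-restore cycle is easy to picture in a few lines of code. This is only a toy model of the idea, not how Puppet is implemented: the dictionaries stand in for the manifest and the server's installed packages, and `reconcile` is a hypothetical name.

```python
def reconcile(policy: dict[str, str], installed: dict[str, str]) -> list[str]:
    """Bring `installed` back in line with `policy` and return a log of corrections."""
    corrections = []
    for package, wanted in policy.items():
        actual = installed.get(package)
        if actual != wanted:
            corrections.append(f"{package}: {actual} -> {wanted}")
            installed[package] = wanted  # stand-in for a real install or downgrade
    return corrections


policy = {"perl": "5.10.1"}   # what the owning team intended
server = {"perl": "5.16.1"}   # a user upgraded Perl by hand

print(reconcile(policy, server))  # first run finds and corrects the drift
print(reconcile(policy, server))  # second run has nothing left to do
```

The same function covers the replacement-server case: start with an empty `installed` dictionary and a single run instantiates everything the policy defines.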

Let's Run The Network On Puppet!

OK, nice idea, but let's not get too far ahead of ourselves here. Puppet requires an agent to run on the device. This is easy to do on a server operating system, but many network devices run a proprietary OS, or limit access to the system sufficiently that it wouldn't be possible to install an agent (there are some notable exceptions to this). Even if a device offers a Puppet agent, creating the configuration manifests may not be straightforward, and will certainly require network engineers to learn a new skill set.

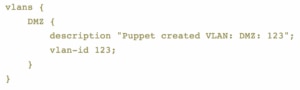

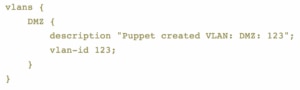

Picking on Junos OS as an example, the standard Puppet library supports the configuration of physical interfaces, VLANs, LAGs, and layer 2 switching, and, well, that's it. Of course, there's something deeper here worth considering: the same manifest configuration works on an EX and an MX, despite the fact that the implemented configs will look different, and that's quite a benefit. For example, consider this snippet of a manifest:

On a Juniper EX switch, this would result in configuration similar to this:

On a Juniper MX router, the configuration created by the manifest is quite different:

The trade-off for learning the syntax for the Puppet manifest is that the one syntax can be applied to any platform supporting VLANs, without needing to worry about whether the device uses VLANs or bridge-domains. Now if this could be supported on every Juniper device and OS version and the general manifest configuration could be made to apply to multiple vendors as well, that would be very helpful.
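To make the "one definition, two renderings" idea concrete, here is a rough sketch in Python rather than Puppet. The `Vlan` class, the `STANZA` mapping and the `render` function are all hypothetical, and the generated text only approximates the shape of Junos configuration (a `vlans` stanza on EX, a `bridge-domains` stanza on MX); it is not the output of the Puppet module.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vlan:
    """Platform-neutral VLAN definition (illustrative only)."""
    name: str
    vlan_id: int


# Assumed mapping from platform family to the top-level stanza it uses.
STANZA = {"ex": "vlans", "mx": "bridge-domains"}


def render(vlan: Vlan, platform: str) -> str:
    """Render one neutral VLAN definition in the style of the given platform."""
    stanza = STANZA[platform]
    return (
        f"{stanza} {{\n"
        f"    {vlan.name} {{\n"
        f"        vlan-id {vlan.vlan_id};\n"
        f"    }}\n"
        f"}}"
    )


blue = Vlan(name="blue", vlan_id=100)
print(render(blue, "ex"))
print(render(blue, "mx"))
```

The person defining `blue` never has to know which stanza a given box expects; that knowledge lives in one place, which is also where support for another vendor would be added.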

Programmability

A manifest in this instance is a text file. Text files are easy for a script to create and edit, which makes automating the changes to these files relatively straightforward. Certainly compared to managing the process of logging into a device and issuing commands directly, creating a text file containing an updated manifest seems fairly trivial, and this may open the door to more automated configuration than might otherwise be possible.
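As a small illustration of how little is involved, the sketch below builds manifest text from a dictionary of VLANs. It assumes a `netdev_vlan` resource type with a `vlan_id` attribute, as I understand the Junos Puppet module to provide; treat the resource and attribute names as assumptions and check them against the module you actually use. The helper names are invented for this example.

```python
def vlan_resource(name: str, vlan_id: int) -> str:
    """Return the text of one VLAN resource declaration (assumed syntax)."""
    return (
        f"netdev_vlan {{ '{name}':\n"
        f"  vlan_id => {vlan_id},\n"
        f"}}\n"
    )


def build_manifest(vlans: dict[str, int]) -> str:
    """Build manifest text with one resource per VLAN, sorted by name for stable diffs."""
    return "\n".join(vlan_resource(name, vid) for name, vid in sorted(vlans.items()))


# Hypothetical input, for example exported from an IPAM tool or a spreadsheet.
print(build_manifest({"green": 200, "blue": 100}))
```

Sorting the output means that regenerating the file after adding a VLAN produces a small, reviewable diff rather than a reshuffled manifest.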

Centralized Configuration Policy

Puppet has been used as an example above, but that does not imply that Puppet is the (only) solution to this problem; it's just one way to push out a policy manifest and ensure that the instantiated configuration matches what's defined by the policy. The main point is that as network engineers, we need to be looking at how we can migrate our configurations from a manual, vendor- (and even platform-) specific system to one which allows the key elements to be defined centrally, deployed (instantiated) to the target device, and for that configuration to be regularly validated against the master policy.

It's extremely difficult and, I suspect, risky, to jump in and attempt to deploy an entire configuration this way. Instead, maybe it's possible to pick something simple, like interface configurations or VLAN definitions, and see if those elements can be moved to a centralized location while the rest of the configuration stays on-device. Over time, as confidence increases, additional parts of the configuration can be pulled into the policy manifest (or repository).
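One way to picture that incremental approach is a validation step that only looks at the sections the central policy owns so far. This is a sketch under the assumption that configurations can be parsed into dictionaries keyed by section; `MANAGED_SECTIONS` and `managed_drift` are hypothetical names.

```python
# Sections the central policy owns today; extend this set as confidence grows.
MANAGED_SECTIONS = {"vlans"}


def managed_drift(policy: dict, running: dict) -> dict:
    """Report differences only in centrally managed sections, ignoring the rest."""
    drift = {}
    for section in sorted(MANAGED_SECTIONS):
        if policy.get(section) != running.get(section):
            drift[section] = {
                "policy": policy.get(section),
                "running": running.get(section),
            }
    return drift


policy = {"vlans": {"blue": 100}}
running = {
    "vlans": {"blue": 100, "test": 999},   # someone added a VLAN by hand
    "snmp": {"community": "example"},      # still managed on-device, so ignored
}
print(managed_drift(policy, running))
```

Pulling another part of the configuration into the policy then amounts to adding its section name to the set and its definition to the repository.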

Roadblocks and Traffic Jams

There's one big issue with moving entire configurations into a centralized repo, which is that each vendor offers different ways to remotely configure the devices, some methods do not offer full coverage of the configuration syntax available via the CLI (I'm squinting at you, Cisco), and some operating systems are much more amenable to receiving and seamlessly (i.e., without disruption) applying configuration patches than others. Network device vendors are notoriously slow to make progress when it comes to network management, at least where it doesn't allow them to charge for their own solution to a problem, and developing a single configuration mechanism which could be applied to devices from all vendors is a non-trivial challenge (cf. OpenConfig). Nonetheless, we owe it to ourselves to keep nagging our vendors to make serious progress in this area and keep it high on the radar. When I look at trying to implement this kind of centralized configuration across my own company's range and age of hardware models and vendors, my head spins. We have to have a consistent way to configure our network devices, and given that most companies keep network devices for at least a few years, even if that was implemented today, it would still be 3-4 years before every device in a network supported that configuration mechanism.

On a more positive note, however, I will raise a glass to Juniper for being perhaps the most "netdev friendly" network device vendor for a number of years now, and I will nod respectfully in the direction of Cumulus Networks, who have kept their configurations as "Unix standard" as possible within the underlying Linux OS, thus opening them up to configuration via existing server configuration tools.
