In this episode, Head Geek and overall database guru Thomas LaRock will lead a discussion of the newest addition to the SolarWinds database performance monitoring family–VividCortex. Thomas will be joined by Hansel Akers, the sales engineering team lead for VividCortex, to discuss how this tool helps leading companies like Yelp and SendGrid deploy and optimize their open-source database applications. Thomas will talk about the differences between traditional database applications and modern applications built on microservices and open source systems, including no-SQL databases. Hansel has worked with dozens of VividCortex customers, and will share insights into how they use the tool to ensure optimal application performance.

VividCortex is a SaaS-based solution supporting a variety of cloud and on-premises databases including MongoDB, PostgreSQL, and others. Hansel and Thomas will walk through the architecture of VividCortex, demo the product, and make some comparisons with DPA. They’ll also hold a Q&A session at the end of the episode.

Episode Transcript

Hi there, welcome to SolarWinds Lab Live. Yes, we are live. This is not a recording. So all the mistakes you hear me say over the next half hour or so, you hear in real time. My name is Thomas LaRock and with me is Hansel Akers.

Hi everyone. Thanks for having me.

Glad to have you here. As you know, today’s episode is gonna focus on Database Performance Monitor. And before I get into that, I wanna mention again, live, we have a chat room, so ask your questions and then Hansel and I can answer them right at the end of this broadcast. So if you aren’t logged in you have to be logged in. So there must be a chat window there. So again, ask your questions, get them ready for us. We have moderators, if the moderators aren’t able to answer your question right away, they’ll toss it to us to answer later live. OK, so guess what? We have something in common. Do you know what that is? Actually, we have a couple of things in common. Besides the fact that neither one of us need an apple box. Let’s talk about how I came to SolarWinds through the acquisition of a little company called Confio Software that made a product called Ignite. You came to us through the acquisition of VividCortex in December. And that was also the name of the product, the name of the company and the product were the same, VividCortex. So that’s one thing in common, we are here because our companies got acquired and they made database performance monitoring software. The other thing in common, is the first thing that happened after we joined is they renamed our products. So Ignite is now Database Performance Analyzer the product, you know, and love. And VividCortex is now Database Performance Monitor. And today we’re gonna help you understand a little bit about the difference between those two and why one would suit you better than the other. So first, before we get into all that. Hansel, tell me a little bit about what you were doing for VividCortex. What your role was there.

Sure. And you’ll probably hear a lot of DPM in reference to Database Performance Monitor. Nice acronym, but thanks Tom. Yeah, I’ve been at VividCortex now for a little over a year. I started as actually the second sales engineer that was hired. And then moved into sort of a managerial role. So I manage a team now. We doubled in size of four, three rock stars and me somehow managing that team. But yeah, so I manage that team. I make sure that we support our sales team first and foremost. And make sure that our customers realize value.

And you said how long you’ve been with VividCortex?

A little over a year now.

A little over a year now. Oh wow. So you guys have grown quite a bit in just a short amount of time.

We have.

So in the time you’ve been here since December, have you noticed say been able to take advantage of a lot of the resources that SolarWinds has to offer?

Definitely. I’ve been blown away by the resources. You know, from day one, even like the acquisition team coming and surprising us on site in Charlottesville, Virginia. It’s been incredible, the support. The people we’ve talked to and has just like been continually giving us support. It’s been awesome.

So well I know this is, when we got bought was one of the first things was the ability to get a lot more development time. Cause there’s only so many developers you have in your company. But when you come to the SolarWinds, you find that you can actually have access to a lot more resources. And what that means is new features, the stuff that you had on that wishlist that you wanted to get into the product now you can get there. And over time we’ve built things like, the anomaly detection with machine learning. I’m not sure we would’ve ever done that if we had stayed just Confio Software. SolarWinds just has a ton of resources to put behind these products and help us get stuff done. Now let’s talk a little bit about, one of the key differences between DPA and DPM. So DPA traditionally is going to to do your relational database management system. So we’re talking your favorite, your Microsoft SQL Server. Also, you know, Oracle, Sybase – I’m sorry, the artist formerly known as Sybase, that’s your SAP. Some of the big players in the space, right? That’s what we focus on. We also do some MySQL, but again, relational database. But DPM doesn’t really focus on relational databases. You wanna talk a little bit about that?

Sure. So, at a high level, DPM is a database performance monitoring software solution. And we support today MySQL, Postgres, MongoDB, Redis, and Aurora databases, Aurora database types. And that includes both locally hosted and cloud hosted, managed and self-managed. So today we’re kind of covering that. Mostly kind of what we’re seeing today is a lot of MySQL. That was kind of the first database type we supported. Our founder Baron Schwartz is well known in the industry, was a lead author of High Performance MySQL, so it’s kind of our baby. He has a great following. I think that’s what led to kind of the growth there. And then of course we’re seeing a lot more growth in Postgres and MongoDB recently.

Let’s talk a little bit about that growth. We actually have a chart here from the DB-Engines Ranking. Erik, you can bring that up there. Perfect. So you can see at the top the, and this goes back seven years. You can see the legend on the bottom. You can see at the top there, Your Oracle, the MySQL, which is owned by Oracle, and then Microsoft SQL Server. But then look at four and five, right? You got your PostgreSQL, you got your MongoDB. Like you mentioned, you’re seeing a lot of that in like say use it by your customers.

Yeah, it’s definitely becoming a lot more consistent. We’re hearing a lot of calls, hearing a lot of migrations to Postgres. And then of course everyone knows Mongo took off pretty quickly right away, right off the bat.

And just looking at the graph, you can see how those two, the orange and the first blue one there PostgreSQL and Mongo, how they are continuing to climb, while those top three have kind of stayed steady. Like those are your old enterprise relational database systems. They’re entrenched. They continue to have a large share of the market. But you see the rise in the popularity of PostgreSQL and MongoDB. I come across it all the time. Anytime I’m touching some data science stuff, some Python code, they’re always talking about how easy it is for you to use PostgreSQL and to get this stuff up and running. You had mentioned how you’re a sales engineer, what did you do before you came into VividCortex?

So I was working for another startup, in Philadelphia. I’m located in Philadelphia. And we were doing basically started as an MBaaS turn into PaaS and healthcare space. So we were basically an interoperability platform for connecting healthcare, data sources, so EMRs and IoT devices, that kind of thing.

Oh, that sounds good. So you were using a lot of tools that you maybe you bought or you built yourself?

It was all built. We were built on top of AWS, but we had a platform we built ourselves and actually it was built on Mongo.

It was built on Mongo. So my previous background, I was a data janitor. Well, database administrator, same difference. And of course my world is mostly relational databases, so Oracle, Sybase, and a lot of SQL Server. So when I jumped and joined Confio, I had a pretty good understanding of that customer base because I was a customer actually before I joined the company. Is that somewhat your experience as well? So you were using a lot of these open-source platforms and then when you jumped to VividCortex, like you had some experience in trying to tune that?

So yeah, way back when, even before that most recent stint with the healthcare startup. I was in the healthcare space. So I was working pretty closely with some SQL back ends there. And then Mongo more recently with… it was called Cloud Nine the company.

And so the customer base for DPM then is really the people that are using a lot of PostgreSQL and Mongo, right?

Right.

So let’s jump to another slide a little bit. I wanna talk about the architecture of DPM. So let’s talk a little bit about this. I’ve got some questions and comments about what I’ve read, some of the great stuff that Baron has written. But maybe you could walk us through a little bit about how DPM gets installed up and running.

Sure, at a high level it’s an agent-based installation, with a web-based UI. So we are hosted on AWS. And the data capture is down to the second in granularity. But what this is showing us, again, I mentioned agent-based install and we kind of separate it into two boxes here. And the agent’s vary customizable and how we configure it. But we can either install what we call on-host it means we’re installing it on the database instance that we wanna monitor or we intend to monitor. And we can also install it off hosts. So for those managed services like RDS, Aurora, Cloud SQL, for GCP, and then Azure Database for MySQL and PostgreSQL in the Azure space. We install alongside of it. And what we can do is rely on existing informational views, like enabling performance game or PG stat statements to pull in all of our metrics. So that’s what we’re kinda showing here either, that left side, I’m installing directly on, and then that right side, we’re gonna install alongside and then rely on some of the existing tables that we can pull from.

Now, of course, at the top we have that big picture of a cloud, right? So one of the key differentiators between the two products is that DPM is software as a service. This is hosted, this is nothing. You’re gonna install an agent to do collection. But the actual application itself is something that is hosted, like you said in AWS, whereas DPA is something you would manage yourself. Now you can run us in AWS or in Azure, you can deploy us through the marketplace, DPA through the marketplace or you could host it yourself, but it’s not software as a service. So that’s a real key differentiator between the platforms being offered for support. It’s also how’s the service actually run?

And I mentioned those agents and their ability to be customized. You can flag them in certain ways to make sure that you know, only the data that you want leaving your environment, leaves your environment.

And what do you mean by that?

So we can flag it. As you know, today we’re capturing, we’ll get into this during the demo. But query samples, query text. You can make sure that you’re, you know, eliminating the capture of specific query text, or eliminating query text in general and just making sure we’re capturing samples with metadata and explain plans and that kind of thing. But right when you install it right from the get-go, you can be sure that you’re only gonna be sending what you wanna be sending.

So since you mentioned it. Enough talking, let’s start showing, all right, let’s start showing what DPM really looks like and you could walk us through the product. And I’ll just ask you some questions along the way.

OK, sounds good. So what we’re looking at here, and I’ll just kinda cover it at a high end here. But this is the summary page. That left-hand side is gonna be your Navigation Pane. So what we can do here is basically jump to all those pages that you’re gonna spend a lot of your time on. Along the top here. We can filter on our hosts. So this is a demo environment we have set up, we have some MySQL, we have some PostgreSQL, and we have some MongoDB in this environment. You can see we’re looking at 57 of 57 hosts right now. So by default, everything that I wanna have in this monitoring app. And we’re looking at the past hour. I’ll just call up by default, we will actually house 13 months worth of data. I mentioned down to the second in granularity. It kind of becomes less granular as we hold it and continue to kind of retain that data. But that’s configurable as well, so we can configure way beyond that. We have some customers doing three years and beyond. So if I want to jump up here and filter on a specific host, database instance, I can do that. I can filter on types of databases. So if I wanna look at, show me just my MySQL hosts, I can do that as well. I can also logically tag these to make sure I’m looking at only the database instances I wanna look at. But that’s kind of at a high level. I’m happy to jump in if you having no questions so far.

Well take us through some of the stuff on the left. Like I see Explorer, what does Explorer mean?

So I’ll jump to Explorer. what Explorer does, it’s gonna kind of give you this view of organizing rank, sort, filter all of our queries by total time. You see down here this bottom portion. And what I can do here, is graph this and I can also remove specific queries. So what we’re gonna do is rank by default, by the amount of time that’s being consumed and we’re gonna rank them that way so you can see that total time metric. And what I’m doing here, is I’m able to actually remove these and peel back these layers of specific queries. And then what we can do in addition to this, is stack charts on top of it. So if I wanna jump up here and look at, CPU utilization and see if I see a CPU spike, maybe I can identify a culprit, a specific query responsible for it. So not only organize the work the database is doing, but help correlate that across your resource metrics, your operating system metrics. There’s other database engine metrics that we’re able to pull in.

Now am I looking at something that’s for say all of everything that’s been collected. Like how do I know what particular node I’m looking at?

So right now we are looking at everything. So we’re looking at all of our nodes, all of our database instances, and that includes MySQL, it includes PostgreSQL, it includes Mongo. We can jump up here and use this global filter to make sure we’re only looking at a specific node that we wanna look at.

I wanted to ask about health.

Perfect. So we have a health page here, with best practices dashboard. So what we do is we’ll bucket those into criticality so you can see those critical warnings that we wanna address immediately. Warnings and then some more informational recommendations. You can see some of them listed here. For Mongo we’ll give some Index Analysis. So if we find some queries that could benefit from additional indexing, we’ll list them here. But this view is really gonna give you a nice, aggregate, list of, you know, kind of all of those best practice recommendations, whether it’s related to security. You can see some of the ones that we’ve passed here in this demo environment. And then some of this database engine the whole way down to query level where we have a custom-built query parser that will give you recommendations on how to make sure that your queries are the most performant they can be.

So if it’s gray that means it’s passed. I was gonna have you click on one I wanted to see actually the index. I’m curious about the index. Show me what that looks like.

Sure. So we can go into and from the Explorer from the profiler, which we’ll touch on in a little bit. We’ll be routed to query details if we click on any of those and it’ll give us a lot of additional metrics about a specific query. So here we’re looking at this, shardedcounters.find. And that’s gonna be like our normalized version of it. And what we can do is actually select any of these. These are sample executions that we’ve captured of this digested query. So I can select these. I can look at that actual sample text now that we’re pulling in. And as I scroll down a little bit further, we’ll get some metadata specific to the sample execution so we get an idea of exactly where it’s coming from you can see the IP here. This ran for 1.86 minutes. And then EXPLAIN PLAN that we mentioned that Index Analysis here, which we’ll pull up. Give us some additional information on how to improve this query.

So for latency, 1.86 minutes, what that means is from the user trying to execute, all the way down to the engine and the engine returning it back to the user. So that’s all layers rolled up.

So this would be, the database’s time for executing a period–

So just in the engine 1.86. And if you scroll down a little bit, I noticed there was the average latency which was 47. So that’s the average over the last hour, but for the one we drilled into, that one was a little bit higher, 1.86 minutes. So now it might be something you’d want to investigate further.

Exactly. You can see some for those samples that we are capturing we have, a lot of those that are kind of laying at the bottom. Those would be those faster frequent ones. And then we have these outliers here. So you know, these are probably the ones as you mentioned that we wanna drill into.

So I noticed alerts over on the left. I’m always interested in alerts.

Yes. So if I jump over to alerting, and I’ll show this in a health dashboard of a little bit as well, but we capture a lot of events just out of box. So whether that’d be like database configuration changes, approaching max MySQL connections are some examples. We have a pretty long list. You can alert quickly on those events. So I can select that event, I can choose the host that I wanna set up that alert on, or that event alert on. And then I can leverage some of our native integrations we support so typically–

All right scroll down. I wanna show those.

So here’s some of the ones that we have already set up in this demo environment. But what I’ll do is I’ll jump and show you the specific integrations that we support.

So we can email Nick. Nick, will fix–

We can, yeah. We can just send everything to Nick. So you can see today we support PagerDuty and Slack, Opsgenie, VictorOps, email, if you wanna send it to Nick. And then also a custom webhook. If it’s something we don’t support natively.

The custom webhook. I like that as well. That’s a nice add-on there.

And then the other thing I wanted to touch on for alerts, is also metric-based alerting and threshold-based alerting. So if we capture that metric, we can set up the alert, and quickly get an idea of exactly what we should be alerting on. For instance, the example we give there is CPU load average. You can see how it also autocompletes for me so I can make sure I’m finding the right metric. But when I get a nice little interactive graph here. So I can say let’s set up a threshold based alert when it exceeds 15 and recovers at 13 and we’ll be able to see exactly what we’re setting up there.

Oh, that is nice. I hadn’t seen that yet. That is very nice.

Nice interactive way to set it up.

So a being a Microsoft SQL Server person, I see the word “profiler.” What’s profiler look like?

So we got a little dose of it in the explorer. This is gonna rank, sort, and filter all of our queries running across all of our selected hosts. And by default we are looking at the most time-consuming queries. This is gonna lie to organize in a bunch of different ways. You can see we’re looking at top 20 queries by total time right now. And so, you know, that insert statement across all 57 hosts, is about 28% of our runtime or about right under nine hours of wall clock time in the past hour. And what we can do here is just quickly identify outliers. So in the past hour on average, show me my longest-running queries as well as those fast and frequents. So a lot of the folks using those custom scripts or maybe a slow query log, some of those would miss those fast and frequents, which of course can consume a good amount of time across my database.

So, and again, this is a all queries across all hosts, everything that’s been collected. So if I wanted to filter by a top filter of the host.

Yeah, let’s go and filter on, just type equal to MySQL. And we can make sure that we’re now looking at just 19 of those 57 hosts. And you can see that insert statement now that we just talked about, which was much lower is accounting for about 80% of total runtime for MySQL hosts.

That’s good.

Yeah, and you can see, we can again click on any of those and we’ll get to that additional metrics query details page. And you can see some of these, we actually have some failed warnings, best practice recommendations, where we can quickly jump into those and see what we can do.

I love the best practice recommendations and I’m gonna assume a lot of that knowledge came from Baron?

It did. You know, Baron, he built a lot of this and he did an excellent job. And then a lot of our CS team are just very talented. So they’ve build a lot of these recommendations and tools as well.

So one of the things that has been built into DPA is a lot of wait time response analysis. So all the relational database systems, they all have the concept of logging what the wait is for that particular query. Is there any waits inside of DPM?

So I think what we’ve done, and we looked at it a little bit earlier, is we kind of flip that on its head. So we kinda look at the top of the funnel. A spike in CPU, a spike in wait for a specific query. And then what we can do is try to identify the specific query that’s lining up with, say a spike in CPU or long wait times.

Excellent.

And then from there, drill down and look at some of those recommendations that we were just talking about.

The way I wanna put it is that, the data being collected in a lot of ways between the two tools is just very similar. It’s just how is the data being presented to the end user for consumption? Because both tools offer a great deal of observability into the layer, especially inside database engine, which is this mysterious unknown for a lot of people. So you’re getting the observability in that database layer and the data it’s kind of all the same. It’s all the same system views and information, and you know, the CPU of a host is the CPU of a host, it’s being used or not. It’s just a matter of how do you want to present that in a way for the audience to consume it so then they can take the right actions at the right time. And this is what I love about the tool is that it’s taking a lot of that information that says, no, let’s show it in this way, a way that is very familiar for that audience. Say the Devs, the DevOpsy folks. Cause they’re used to seeing this type of input, this is what they really want. So for us to be able to offer that, I think is just amazing. Let’s talk notebooks.

OK, let’s jump to notebooks.

This is one of my favorite features is the notebooks. So it’s sitting right there and I’ve been dying to ask about it.

So notebooks is gonna combine basically everything we’ve looked at so far into a dynamic document. So what we can do here, you see we have one that we’re hovered on now, but it’s gonna have basically links to different areas of the app. It’s going to have different dashboards that we can embed in these and we can make sure to export these and share them with the folks that need to be shared with. And then we have a bunch of other templates that we can use here. so for instance, we can look deployment runbooks. We can do code deploy checks, environment inspections and what this does, as soon as I kind of deploy this into a notebook, it’ll start populating from my environment so I can make sure to choose the right hosts, the right time ranges, so I can do a quick environment inspection and see how I’m performing over that selected time range.

So I have just come across notebooks say recently, last three years of my life as I’ve pivoted a little bit to being more data science, data analyst. And so I get to fool around a little bit and not just with Python but with Jupyter Notebooks. So when I saw this feature and I started to understand the real power behind it, the ability to share knowledge between silos. So everybody that you want can have access to this tool and this is kind of your runbook, this is kinda the idea that, Hey, this thing happened at this time. Let me show you and this is what I had to do to solve it and maybe we should go and fix it. Or now can we embed datasets inside the notebook as well?

You can, yes. So I know the one we discussed recently and yesterday was like post-mortems. So you’re paged in the middle of the night, I’m gonna put together a notebook, a runbook so that the next person doesn’t spend an hour like I spent, but maybe 10 minutes so they can resolve quicker. But you can see some of those. We have some of the ones that you’re mentioning like a runbook and some environment inspections. But if I scroll down a little bit further, let’s pick an exciting one here. You can see that this is gonna reflect a lot of different areas of the app where I can look, where I can route to. And then if I look at this environment inspection as well, you’ll see as I scroll down we’re gonna get those charts that we wanna look at, specific to our resources, specific to system performance. So all of this you can customize, you can embed, as long as you can do it in markdown, you basically can do it in notebook.

All right. So the question I have right now cause I haven’t asked it of you yet, is when you’re doing a demo of this tool, what’s the “aha” moment for the customer, when they’re looking at it going and what are you showing when the next question is how much does this cost?

So there is some really good workflows. I think something that every customer values is the ability to drill down so quickly. So I’m gonna profile my workload, I’m gonna look at them as time-consuming query and I’m gonna grab a sample and looking at EXPLAIN PLAN within two clicks. So that’s something that’s really just easy to use. You know, and then we have some other really, really useful workflows. I think something that people really like is the ability to compare time ranges. So this is something we show quite a bit. So for instance, if we wanna look at an hour over hour comparison, and look at the relative change across my entire workload, I can do that. So, you know, customers, and I know something you mentioned earlier is like DevOps, which is a very heavy role that spends a lot of time in here. So customers, is one of our largest customers GitHub. All their engineers will own their code. So when they deploy code, they all log in here and they make sure that, they’re not seeing anything they don’t anticipate. So in-app deployment would be a great use case here to compare time ranges and make sure that there’s nothing happening that they didn’t expect to see go on there.

So the employees, GitHub employees use DPM, in order to make sure that the code they deploy to run GitHub has no issues that they weren’t expecting.

Yeah, they all log in right after deployments. They know what parts they own and they know that what they need to pay attention to–

I didn’t know that. I think I’d heard GitHub was a customer, but I hadn’t really thought about how they were using it. Especially in that DevOps-type role. If it’s good enough for them, it’s gotta be good enough for, yeah.

They’re leading the charge; they do it really well.

So a question I have here is, Is it possible for me to compare just a couple of queries? I can see comparing a time range for an hour. But what if I wanted to focus a little bit further? How would I do that?

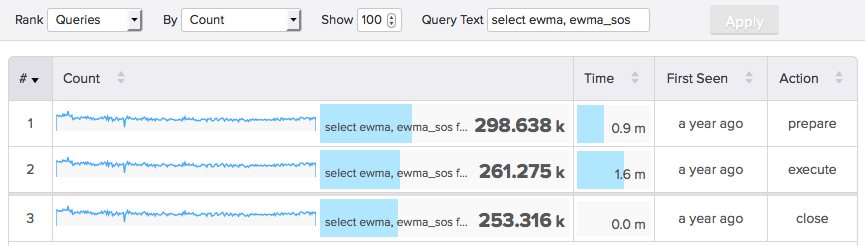

So within here there’s a lot of other datasets that we haven’t had time to look at yet. We can filter on specific query text. So we have a table in here called guestbook. You know, if I wanna filter on every query that interacts with guestbook, I can do that and then look at it like a comparative time range. So if I wanna filter down on any sort of query text, I can do that here. So that would be your kind of way to make sure you can identify a specific query or specific subset of queries.

All right, cool. Another question I kind of forgot about for notebooks. Something else I wanna ask, do you have libraries and examples?

We do, yeah. So I’ve jumped up here a couple of times. These are your templates, so you can start with a blank template where you can kinda get started and make something yourself. But these are the ones that are customers that the folks we talked to on on sales calls, would find most valuable. So our CS team, has put together these, where again, if I kind of use one of these, it starts populating for my environment. I can make small tweaks but–

So if I deploy one of those, it will start populating automatically for you.

Right, so the one we popped up here earlier was environment inspection for MySQL.

And this is the markdown?

Right exactly. So if I go ahead and preview it, we’re gonna reflect the specific system metrics, these resource metrics, for the environments that we’re looking at. so this is the environment we’re looking at. This is the MySQL host that we have selected.

And you mentioned the postmortem, so I was gonna ask, so with this type of ability to create a notebook, do you have customer stories where they tell you, look, I was a few clicks away. I created this particular notebook visualization. I got in front of the manager or the person who actually holds the purse strings and said, Hey, yeah, how much, like does that have that value?

So the flexibility in notebooks is incredible. I think a lot of our customers have used it pretty well. These are the actual templates the ones we’ve created are ones that customers have given us feedback and said this worked for us.

Awesome. That’s what I was looking for. So I think we’re gonna cut looking for some questions. I’ll have help from the moderators if you have some to bring up. In the meantime, I’m gonna talk a little bit about some of the key points just to review. So again, DPM, focuses on your open-source platforms. So we’re talking PostgreSQL, MySQL, Redis, Aurora, and it complements the offering that we have for Database Performance Analyzer, which is your historical relational database platform. So your Oracle, your SQL Server, your Sybase DB2, God, we do a lot. We do RDS as well, we do Azure SQL Database, we can do it all. So that’s one of the key differentiators. Another one of course is software as a service. So subscription-based model is how you get to DPM. For DPA, it’s traditional software licensing. DPM, primarily customers are Dev and DevOps and some DBAs, where DPA, it’s primarily a lot of DBAs. Then some developers, some DevOps. Sometimes I hear people they wanna deploy us just to a operation center, cause they just need to know red, green, blue, and who to call next. And then that person ends up using our tool to do a little more diagnostic information. DPM has all of these dashboards are customizable?

Yes, they are.

Yes. That’s what I thought. So that’s another slight differentiator. DPA, you can make some customizations and if you can crack open some XML, but it’s not as user friendly. But DPM is very user friendly when it comes to creating your own dashboards. And of course the notebook feature is just amazing. If you need more information, I would tell you go to solarwinds.com/database-performance-monitoring-software. Do we have some questions?

We do. I think you’ve covered it pretty well there. So the first question, we touched on this a little bit, is there security over the access to that we’ve been seeing on query details? For example, a financial firm, with personal corporate information, probably adhering to PCI compliance here as well, but they could show up in a query information, must be restricted. So we talked a little bit about this. We have some flags I’ll actually pull it up here so we can see it a little bit better. So right when we’re installing the host, I can jump up here and make sure that, for instance, I’m capturing a sample without that query text. And you know, typically if people are really worried about it, I tell them to start here. We do have ways to purge samples. So if you install incorrectly the first time, they should get rid of those. And then using config files you can get the whole way down to specific query text you wanna avoid. So you can whitelist, you can blacklist specific query text. And we do have a lot of folks in that space. So fintech, finservices, even healthcare that are adhering to HIPAA and PCI compliance as well.

Awesome, next question.

You briefly mentioned the data retention windows of three days of one-second granularity and 30 days of one-minute. What if my organization needs something different like 30 days of one-second and or six months of one-minute?

Are they customizable?

They are, they are customizable as I mentioned, even adhering to like HIPAA standards, I think you need to have like three years worth of data retention. So we have ways to customize it. Whether it’s the granularity and time ranges of the granularity as well as just the length of historical data retention. They are completely customizable.

Now if they needed to customize it by, database being monitored, would they need a different agent or can they, is it that granular?

You should be able to customize it by database.

OK, cool.

So we have a bunch of different config files and places we could make sure we’re only capturing and what we wanna capture as well as the historical data retention we wanna capture.

I would just assume the more data you capture means probably a bigger bill on the other end?

A little bit.

Exactly, if the CSO comes to you and says I need seven years of data. You should remind them, that’s might be a bit more of a cost cause that’s how subscriptions work. All right, next question if there is one. I think so.

Yes, we do have another question. Can you go over areas of the product where you can compare metrics? So we talked and showed a little bit about profiler comparing those metrics. You know, Explorer, I like to call it MS profiler on steroids so we can jump to Explorer here and get kind of those same views here that we were looking at earlier. But we also have the ability in here to compare time ranges. So not just that hour over hour that we were looking at for our top 10 queries by total time, but also our resource metrics. So if I wanna compare CPU hour over hour, or you know, it’s pretty customizable if I wanna compare a CPU, the most recent hour of CPU compared to one week prior, the exact same hour.

I’m gonna touch the screen real quick. So you’re showing me now, so this is for all queries and that spike and the CPU is the entire CPU for the hour. OK. Got it.

So this is the most recent hour here. This is the hour before and it looks like, this is pretty consistently like the top query.

Yup, exactly. OK.

And when you look, it looks like we have some queries dropping off, picking up probably from a code deployment that we’re pushing on our demo environment.

Awesome. Any last questions before we sign off?

Is the product licensed per user or per database instance? So today it’s per database instance, license is a database instance and we give volume discounts for those situations where we get into like microservices and stuff like that. And then we actually, it’s unlimited users. So you’re paying for the database instance, it’s unlimited users and you’re gonna get access to things like, which we didn’t touch on but SSO, so we have SAML, OAuth access to all that unlimited users. So if you wanna share something, instead of having to export it outside, just send a quick link that we have to VividCortex or DPM. And they can log in and make sure that we’re looking at exactly what we were looking at.

You mentioned microservices and volume what were you talking there?

We actually have a wide variety of folks that are kind of more modern applications like GitHub, where they’re deploying really frequently. Continuous integration, continuous deployment, you know, always iterating. But we work with a wide variety. So, we make sure that we try to be flexible in our pricing because we know we have more if you have microservice, you’re gonna have more instances, you’re gonna have like a service for application. We also work with folks that are, kind of in the process of decoupling and they can use us to do that as well.

Now, are the microservices usually in the development environment or people like spinning up a lot of stuff in production or?

So that’s another good point. So we have folks that don’t just monitor production environments. So you can logically separate them. Actually, if I jump up here, you can see how we can logically separate these. So it might be production, it might be staging, it might be development. And we can kind of watch it as we progress it and push it through to production. So you have the ability to make sure you do that. We actually have one customer that uses VividCortex to confidently push right to production. [Thomas laughs] They say they’re saving tons of money. So it’s pretty impressive right to production.

I do all my best work in production.

You’re less likely to make mistakes.

Did we get one more question I think or no?

I think there’s something coming in. As a monitoring engineer who is neither a DBA nor a DevOps person, is DPM a good choice to try? I think also one of the big differentiating factors of DPM is that we try to make it usable for everybody. So not just your expert who can jump in here, and knows exactly where to look, but we try to give you those templates, good use cases to use to be able to identify opportunities for improvement. So we have like more than novice. We have experts using the product a wide, a wide range of folks that find value out of DPM.

Awesome. Any final questions before we wrap this up? I see somebody else coming through.

The product seems to scale really easily, but how useful is it for looking at smaller one-server environments? Just as valuable. So it’s one of our use cases here is the ability to kind of have everything in one view. So whether it’s different database types, whether it’s a high volume of a specific database type, if it’s one database instance, we have tons of customers that have started that way and we’ve scaled alongside them and we have probably 50% of our customers that have one or two licenses and is monitoring one or two databases. So in those situations, still really valuable. Just kind of at a smaller scale and heightened so you can kind of put a magnifying glass, against those two databases or the single database that you’re looking at.

All right. I think we’re good. I think we’ve answered all the questions that have come through. I want to thank you Hansel for being here again today.

Thanks for having me.

Yeah, no problem. Come back anytime.

All right, I’ll do that.

See, this wasn’t so bad.

Not too bad.

And I wanna thank you for watching us here on SolarWinds Lab. [electronic music]