IT industry veterans have seen significant changes in the way applications are not only developed but also used. This evolution has been driven by several factors, including the ever-growing need for mobility, vast ecosystems, and the increasing demand for faster and more efficient ways to get work done. Let's take a closer look at how apps have evolved over time.

A Brief History of Application Evolution

First generation

First-generation application architectures were basic and simple. Think of the client-server type of applications people use within their offices (Microsoft and its Office suite immediately come to mind). Computing then was very centralized; data wasn't being exchanged between organizations or moved around from where it originated.

Three-tier architecture

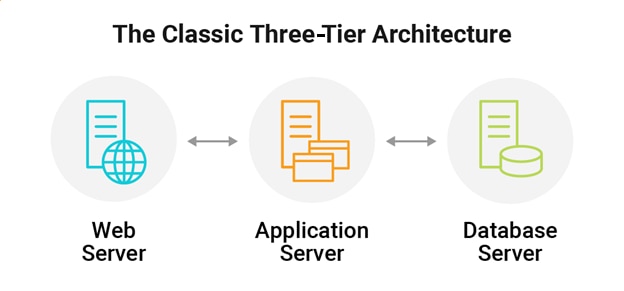

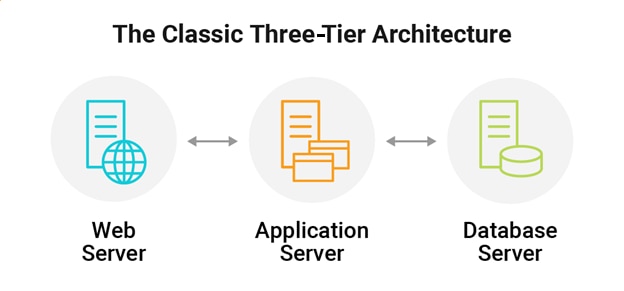

Then came the three-tier architecture. The classic example contained a web front end, an application, and a database backend. This is where we saw the private data center really begin to expand, providing services to its users, both internal and external.

One important thing to keep in mind when thinking about application evolution is that apps don't run in isolation. They depend on many infrastructure components, including network, storage, and compute. All these areas have evolved significantly along with the apps they serve.

Digital transformation

Next came the digital transformation age and the cloud's rise, which could provide infrastructure capabilities much faster. This sped up application evolution even more.

Service-oriented architecture

Then we entered the era of Service-Oriented Architecture, or SOA. SOA is a design approach for organizing and modularizing application functionality into small, independent, reusable services. By separating applications into smaller services, SOA allows individual services to be developed, tested, deployed, and reused independently. This helps reduce the overall complexity of the application and makes it easier to change and update individual services.
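To make the idea of independent, reusable services a little more concrete, here is a minimal Python sketch. Every name in it (`PricingService`, `InventoryService`, `OrderService`, and the in-memory implementations) is hypothetical and chosen only for illustration. In a real SOA deployment the services would usually sit behind network interfaces rather than in one process, but the principle is the same: each service exposes a small contract, and callers depend on that contract rather than on the implementation.

```python
from dataclasses import dataclass
from typing import Protocol


class PricingService(Protocol):
    """Contract for any service that can price an item (hypothetical)."""

    def price(self, sku: str) -> float: ...


class InventoryService(Protocol):
    """Contract for any service that can report stock (hypothetical)."""

    def in_stock(self, sku: str) -> bool: ...


@dataclass
class StaticPricing:
    """In-memory stand-in for a pricing service."""

    prices: dict[str, float]

    def price(self, sku: str) -> float:
        return self.prices[sku]


@dataclass
class StaticInventory:
    """In-memory stand-in for an inventory service."""

    stock: dict[str, int]

    def in_stock(self, sku: str) -> bool:
        return self.stock.get(sku, 0) > 0


@dataclass
class OrderService:
    """Composes the two services using only their contracts."""

    pricing: PricingService
    inventory: InventoryService

    def quote(self, sku: str, quantity: int) -> float:
        if not self.inventory.in_stock(sku):
            raise LookupError(f"{sku} is out of stock")
        return self.pricing.price(sku) * quantity


orders = OrderService(
    pricing=StaticPricing({"widget": 2.5}),
    inventory=StaticInventory({"widget": 3}),
)
total = orders.quote("widget", 4)
```

Because `OrderService` only relies on the two contracts, either implementation could be replaced, tested, or redeployed on its own without touching the others, which is the property the paragraph above describes.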

Microservices and containerization

SOA led to new development approaches such as microservices and the containerization of applications, along with greater automation. The downside of this evolution was increased complexity. Looking back, client-server and even three-tier architectures were pretty simple to troubleshoot when something broke or wasn't working as expected. Software development, although slow, could keep up.

Modern application trends to know

Since the speed of development isn’t an issue anymore, and with the increase in cloud adoption via AWS, Azure, and other platforms, applications are now being developed much more rapidly. Developers and DevOps teams use many cloud services and components due to their ease of use and simplicity.

But that negative aspect of increased complexity is rearing its ugly head again, making troubleshooting and monitoring orders of magnitude harder. Metrics have become more important, too, to assist in these efforts.

Now that we've seen how applications have evolved in recent years, let's look at some up-and-coming trends set to change applications again.

Edge computing

Edge computing involves bringing compute and storage closer to the end user, i.e., the "edge" of the network. This can be done in a variety of ways, including by deploying servers and other hardware near users or using distributed data architectures that place data closer to the source.

This evolution brings new challenges because, once again, we are fundamentally changing the way applications are architected and deployed, which means it's essential to ensure we have a clear understanding of what is going on.

Machine learning and artificial intelligence

The need for a better understanding of application performance brings us to machine learning (ML) and artificial intelligence (AI). They've become popular because they offer a way to automate the process of learning from data, from crunching numbers to making operations more efficient.

The huge amounts of data generated make it difficult for operators to determine where things have become problematic. ML and AI have caused an enormous evolution in data analysis, and they go hand-in-hand with the evolution in application architectures.
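As a rough illustration of how software can flag problem spots in a stream of metrics, the sketch below marks samples that deviate sharply from a rolling baseline. It is a deliberately simple statistical stand-in, not a description of how any particular ML or AIOps product works. The function name `find_anomalies`, the `window` and `threshold` parameters, and the sample latency values are all assumptions made for the example.

```python
from statistics import mean, stdev


def find_anomalies(samples, window=5, threshold=3.0):
    """Return indexes of samples far outside the preceding window.

    A sample is flagged when it lies more than `threshold` standard
    deviations from the mean of the previous `window` samples.
    """
    flagged = []
    for i in range(window, len(samples)):
        baseline = samples[i - window:i]
        spread = stdev(baseline)
        if spread == 0:
            # A perfectly flat baseline gives no scale to compare against.
            continue
        if abs(samples[i] - mean(baseline)) > threshold * spread:
            flagged.append(i)
    return flagged


# Hypothetical response times in milliseconds, with one obvious spike.
latencies_ms = [100, 102, 98, 101, 99, 100, 103, 97, 500, 101]
spikes = find_anomalies(latencies_ms)
```

Here the only flagged index is the 500 ms spike. Production systems typically learn seasonal patterns and correlate many signals at once, but the underlying idea of comparing new data against a learned baseline is similar.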

Why applications need AIOps and observability

As system complexity increases, insight into how applications perform becomes even more important; it's no longer as simple as looking at a single server. In the IT industry, this has led to the relatively new field of AI Operations, or AIOps. AIOps can help you peer into an environment to find current or potential issues far more quickly than a human could.

The growth of AIOps has also led to a need for better, more comprehensive, and faster monitoring: enter observability. Without observability, troubleshooting becomes an incredibly slow, prolonged, and laborious process, stopping businesses from innovating as they fight fire after fire.

Given the constantly increasing complexity of application issues, it's clear why AIOps + observability has become a necessity for organizations. By leveraging AIOps alongside observability, businesses can more easily address the overall complexity associated with managing and optimizing modern applications. You can learn more about how observability and AIOps are transforming the world here.

If you're looking for an observability solution built to give insight into an application in any stage of evolution, from more traditional data center applications to cutting-edge technology like Kubernetes and microservices, take a look at the observability solutions built on the SolarWinds Platform. For comprehensive application observability designed to go beyond basic metrics, traces, and logs to help you ensure the availability and performance of cloud-native applications, custom applications, and microservices, you can learn more about SolarWinds Observability. For end-to-end visibility across on-premises and multi-cloud environments, SolarWinds® Hybrid Cloud Observability can provide a deep, holistic view across the network, cloud, infrastructure, application, and database.
